Why everyone is Googling how to actually implement MCP

If you are building with agents in 2026, you have probably seen the same search box suggestions: how to wire an MCP server, which transport to use, how to stream tool results, and how to bolt several MCP backends together without turning your agent into a fragile pile of prompts. That burst of curiosity is not hype chasing. It tracks a real shift: the Model Context Protocol has turned into the default shape for “model meets tools and data,” and teams are past the slide deck phase. They need wiring diagrams and failure modes, not another acronym explainer.

Anthropic framed MCP in November 2024 as an open standard for connecting assistants to the systems where information lives, with specification work, SDKs, Claude Desktop support for local servers, and an open catalog of starter integrations aimed at places teams already use every day. Community docs spell out what an MCP server can expose (tools the model can call, resources it can read, prompts packaged as reusable templates). Hands-on guides from the field add the bruises: stdout discipline under STDIO, picking Streamable HTTP over legacy SSE for streaming, and treating tool schemas like a public API so the model stops calling the wrong thing under pressure. (Sources: Anthropic MCP announcement, MCP documentation guide, Nearform MCP implementation guide)

The pitch behind MCP was never “install another plugin.” It was always about shrinking bespoke integrations into one protocol-shaped boundary so permissions, logging, and versioning work the way they already do for internal APIs. That migration is the work teams are doing now.

What landed when MCP went mainstream

When MCP broke cover as an open protocol, three pieces mattered for builders immediately: you could read a stable mental model in docs (tools, resources, prompts), you could run servers locally through Claude Desktop and ship against shared SDKs, and you could browse premade servers aimed at common stacks instead of inventing glue from zero each time. Vendor announcements also named early adopters and tooling partners, which signaled this was meant as shared plumbing across products, not a single-app experiment. (Sources: Anthropic MCP announcement, MCP documentation guide)

- Anthropic shipped MCP as an open standard on November 25, 2024, pitching universal hooks between AI systems and data alongside specification work, SDKs, Desktop-hosted MCP, and an open repository of servers targeting surfaces such as Google Drive, Slack, GitHub, Git, Postgres, and Puppeteer in the launch story. (Source: Anthropic MCP announcement)

- The official MCP documentation stack describes the protocol as the bridge between LLMs and external capability: callable tools, readable resources, and first-class prompt templates so clients know what they can offer models beyond raw chat context. (Source: MCP documentation guide)

- Nearform’s implementation write-up steers teams toward official SDKs in TypeScript and Python, contrasts STDIO for local dev against HTTP-oriented setups for remote deployments, and notes SSE fading behind Streamable HTTP for streaming in production-minded setups while stressing observability and least privilege. (Source: Nearform MCP implementation guide)

- That same Nearform guide pushes a disciplined build path: one or two narrow tools first, validate schemas with inspectors before you involve an LLM, and name tools like endpoints so models cannot confuse overlapping responsibilities at scale. (Source: Nearform MCP implementation guide)

- Anthropic’s announcement paired ecosystem rhetoric with named adopters and IDE vendors, underscoring MCP as integration fabric others embed, not a Claude-only sidebar feature. (Source: Anthropic MCP announcement)

Why “implementation guide” became the headline

Once tools behave like APIs, you win the ability to test without mysticism. Backends can negotiate scope using plain nouns (tools, resources, prompts) instead of burying rules inside sprawling system prompts. Inspectors and dev CLIs let you prove the server speaks MCP correctly before the model layers ambiguity on top, which is why Nearform stages debugging without the LLM first when something breaks in production. Anthropic’s packaging of SDKs, Desktop pathways, and starter servers also lowered time-to-first-working-tool, which naturally steers search traffic toward tutorials that mirror production constraints rather than conceptual essays. (Sources: MCP documentation guide, Nearform MCP implementation guide, Anthropic MCP announcement)
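To make the "prove it without the model" step concrete, here is a minimal sketch of an inspector-style check. It assumes an in-process handler that speaks JSON-RPC 2.0 with the `tools/list` and `tools/call` methods; the `tickets_search` tool, the `handle` function, and `check_server` are hypothetical names for illustration and are not taken from any of the cited guides or from an official SDK.

```python
import json

# Hypothetical tool catalog for illustration only.
TOOLS = [
    {
        "name": "tickets_search",
        "description": "Search support tickets by keyword and return matching ids.",
        "inputSchema": {
            "type": "object",
            "properties": {"query": {"type": "string"}},
            "required": ["query"],
        },
    }
]


def reply(request: dict, *, result=None, error=None) -> dict:
    """Wrap a result or an error in a JSON-RPC 2.0 response envelope."""
    body = {"jsonrpc": "2.0", "id": request.get("id")}
    if error is not None:
        body["error"] = error
    else:
        body["result"] = result
    return body


def handle(request: dict) -> dict:
    """Answer the two tool methods this sketch supports; reject everything else."""
    method = request.get("method")
    if method == "tools/list":
        return reply(request, result={"tools": TOOLS})
    if method == "tools/call":
        params = request.get("params", {})
        if params.get("name") != "tickets_search":
            return reply(request, error={"code": -32602, "message": "Unknown tool"})
        query = params.get("arguments", {}).get("query", "")
        text = f"no tickets match {query!r}"  # stand-in for a real lookup
        content = [{"type": "text", "text": text}]
        return reply(request, result={"content": content, "isError": False})
    return reply(request, error={"code": -32601, "message": "Method not found"})


def check_server() -> list[str]:
    """Run model-free checks and return a list of problems (empty means clean)."""
    problems = []
    listing = handle({"jsonrpc": "2.0", "id": 1, "method": "tools/list"})
    tools = listing.get("result", {}).get("tools", [])
    if not tools:
        problems.append("tools/list returned no tools")
    for tool in tools:
        for key in ("name", "description", "inputSchema"):
            if key not in tool:
                problems.append(f"tool is missing '{key}'")
    call = handle({
        "jsonrpc": "2.0", "id": 2, "method": "tools/call",
        "params": {"name": "tickets_search", "arguments": {"query": "refund"}},
    })
    if "result" not in call or call["result"].get("isError"):
        problems.append("tools/call failed for tickets_search")
    json.dumps(call)  # raises if the response is not JSON-serializable
    return problems
```

If `check_server()` returns an empty list, the protocol surface is at least self-consistent, and any remaining misbehavior is more plausibly a prompting or tool-selection problem than a wiring problem.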

Treat each tool like a small POST endpoint: tight JSON schema, obvious failure messages, and expansion only after the narrow path works. Nearform frames that sequence as the gap between infrastructure you can debug and “prompt soup” nobody can reproduce.
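As a rough illustration of "tight JSON schema, obvious failure messages," the sketch below defines one narrow tool and rejects bad arguments with messages a model (or a human) can act on. The `orders_refund` tool, its fields, and the hand-rolled `validate` helper are assumptions made for this example; a real server would more likely lean on its SDK or a full JSON Schema validator, and this checker only understands the few keywords it uses.

```python
# Hypothetical schema for a single narrow tool; field names are illustrative.
REFUND_SCHEMA = {
    "type": "object",
    "properties": {
        "order_id": {"type": "string"},
        "amount_cents": {"type": "integer", "minimum": 1, "maximum": 50000},
        "reason": {"type": "string", "enum": ["damaged", "late", "duplicate"]},
    },
    "required": ["order_id", "amount_cents", "reason"],
}

TYPES = {"string": str, "integer": int}


def validate(args: dict, schema: dict) -> list[str]:
    """Check args against the small subset of JSON Schema used above."""
    errors = []
    props = schema["properties"]
    for name in schema.get("required", []):
        if name not in args:
            errors.append(f"missing required field '{name}'")
    for name, value in args.items():
        rule = props.get(name)
        if rule is None:
            errors.append(f"unknown field '{name}'")
            continue
        # bool is a subclass of int in Python, so reject it explicitly.
        if not isinstance(value, TYPES[rule["type"]]) or isinstance(value, bool):
            errors.append(f"'{name}' must be of type {rule['type']}")
            continue
        if "enum" in rule and value not in rule["enum"]:
            errors.append(f"'{name}' must be one of {rule['enum']}")
        if "minimum" in rule and value < rule["minimum"]:
            errors.append(f"'{name}' must be >= {rule['minimum']}")
        if "maximum" in rule and value > rule["maximum"]:
            errors.append(f"'{name}' must be <= {rule['maximum']}")
    return errors


def call_refund(args: dict) -> dict:
    """Return a tool-style result: validation errors first, the work second."""
    errors = validate(args, REFUND_SCHEMA)
    if errors:
        return {"isError": True, "content": [{"type": "text", "text": "; ".join(errors)}]}
    text = f"refund queued for {args['order_id']}: {args['amount_cents']} cents ({args['reason']})"
    return {"isError": False, "content": [{"type": "text", "text": text}]}
```

The design choice worth noting is that every rejection names the field and the rule it broke, so a failed call reads like an API error rather than a mystery.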

Where MCP gets fragile in the real world

The protocol does not delete security work; it moves it. STDIO servers use stdout for protocol frames, so stray log lines written to stdout corrupt the session; send logs to stderr or a structured log sink instead. Remote setups hit reverse proxies, TLS termination, and CORS exactly like other streaming APIs. Multi-tenant deployments wrestle with tokens scoped per user instead of one mega-credential for every call. Nearform also sketches meta-servers that consolidate multiple backends behind one coherent tool catalog so routing, auditing, and rate limits stay centralized while the model sees fewer overlapping tools. Anthropic’s launch language emphasized secure two-way links between data and tools, which is code for “your governance team will review MCP like any surface that touches production data.” (Sources: Nearform MCP implementation guide, Anthropic MCP announcement)
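The stdout rule for STDIO servers is easy to state and easy to break, so a small sketch may help. It assumes newline-delimited JSON-RPC messages on stdout and routes all logging to stderr; `send`, `handle_line`, and `demo` are hypothetical helper names for this example, not SDK functions.

```python
import io
import json
import logging
import sys

# All diagnostics go to stderr so stdout carries nothing but protocol messages.
logging.basicConfig(stream=sys.stderr, level=logging.INFO)
log = logging.getLogger("mcp-stdio-sketch")


def send(message: dict, out=None) -> None:
    """Write exactly one JSON-RPC message per line to the protocol stream."""
    stream = sys.stdout if out is None else out
    stream.write(json.dumps(message) + "\n")  # json.dumps escapes embedded newlines
    stream.flush()


def handle_line(line: str, out=None) -> None:
    """Parse one request line, log to stderr, and answer on the protocol stream."""
    log.info("received %d characters", len(line))  # safe: never touches stdout
    request = json.loads(line)
    send({"jsonrpc": "2.0", "id": request.get("id"), "result": {}}, out)


def demo() -> list[str]:
    """Capture the protocol stream to confirm it holds only JSON lines."""
    buffer = io.StringIO()
    handle_line('{"jsonrpc": "2.0", "id": 1, "method": "ping"}', out=buffer)
    return buffer.getvalue().splitlines()
```

A stray `print("debug")` anywhere in the request path would add a non-JSON line to the same stream, which is exactly the failure the paragraph above describes.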

Patterns worth stealing early: least privilege on databases and filesystems, parameterized queries, directory allowlists, and saying no to arbitrary shell unless it lives inside a real sandbox.
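Two of those patterns fit in a few lines each. The sketch below shows a parameterized query against SQLite and a directory allowlist check; the `/srv/mcp/docs` root, the `customers` table, and the function names are assumptions chosen for illustration, and `Path.is_relative_to` requires Python 3.9 or later.

```python
import sqlite3
from pathlib import Path

# Hypothetical allowlist: the only directory tree file tools may read from.
ALLOWED_ROOTS = [Path("/srv/mcp/docs")]


def is_allowed(requested: str, roots=ALLOWED_ROOTS) -> bool:
    """Resolve '..' segments and symlinks first, then compare against the allowlist."""
    resolved = Path(requested).resolve()
    return any(resolved.is_relative_to(root.resolve()) for root in roots)


def find_customer(conn: sqlite3.Connection, email: str) -> list[tuple]:
    """Bind user input as a parameter instead of formatting it into the SQL text."""
    cursor = conn.execute("SELECT id, email FROM customers WHERE email = ?", (email,))
    return cursor.fetchall()


def make_demo_db() -> sqlite3.Connection:
    """Build a throwaway in-memory database for trying the query."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE customers (id INTEGER PRIMARY KEY, email TEXT)")
    conn.executemany(
        "INSERT INTO customers (email) VALUES (?)",
        [("a@example.com",), ("b@example.com",)],
    )
    return conn
```

With the bound parameter, an input such as `' OR '1'='1` is treated as a literal email address and matches nothing. The allowlist check compares whole path components, so a sibling directory like `/srv/mcp/docs-evil` does not slip through on a string-prefix match.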

A practical checklist before you widen scope

- Pick the transport your production clients actually support. Nearform contrasts spawning STDIO locally with HTTP-oriented stacks for remote peers and highlights Streamable HTTP ahead of deprecated SSE when you need streaming semantics that survive proxies.

- Hammer schemas early. Overlapping tools with fuzzy descriptions get blamed on the model (“the model is dumb”) when the real problem is an ambiguous contract; enums, bounds, and stable outputs beat heroic prompting.

- Use the same vocabulary for prompts, tools, and resources across engineering and security so nobody argues past each other in review.

- If HTTP MCP is on your roadmap, rehearse curl checks, TLS paths, load balancer buffering, and CORS early because long-lived streams fail for boring infrastructure reasons.

- Anthropic’s ecosystem positioning still matters because official SDKs and Desktop onboarding anchor newcomers before fork drift sets in.

(Sources: Nearform MCP implementation guide, MCP documentation guide, Anthropic MCP announcement)
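The schema advice in the checklist above can be partly automated. The following sketch is a hypothetical catalog lint: it flags duplicate tool names, descriptions too short to tell tools apart, unbounded strings, and numbers without limits. The thresholds and the `lint_catalog` name are assumptions for this example rather than rules from the MCP specification or the Nearform guide.

```python
def lint_catalog(tools: list[dict]) -> list[str]:
    """Return human-readable findings for a list of tool definitions."""
    findings = []
    seen = set()
    for tool in tools:
        name = tool.get("name", "<unnamed>")
        if name in seen:
            findings.append(f"{name}: duplicate tool name")
        seen.add(name)
        # Assumed heuristic: fewer than five words rarely disambiguates a tool.
        if len(tool.get("description", "").split()) < 5:
            findings.append(f"{name}: description too short to disambiguate")
        props = tool.get("inputSchema", {}).get("properties", {})
        for field, rule in props.items():
            kind = rule.get("type")
            bounded_string = any(k in rule for k in ("enum", "maxLength", "pattern"))
            if kind == "string" and not bounded_string:
                findings.append(f"{name}.{field}: string has no enum, maxLength, or pattern")
            has_bounds = all(k in rule for k in ("minimum", "maximum"))
            if kind in ("integer", "number") and not has_bounds:
                findings.append(f"{name}.{field}: number is missing minimum or maximum")
    return findings
```

Running a lint like this in CI is one way to catch contract ambiguity before it shows up as a confusing model trace.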

Operator note (first-hand): On 2026-05-02, the public Anthropic MCP announcement page, viewed without signing in, still carried the November 25, 2024 dateline and the bundle described at launch: the spec and SDKs, Desktop-backed MCP, and an open server repository. That is consistent with the sourcing above. (Source: Anthropic MCP announcement)

Skip the plugin mentality. Ship narrow tools with explicit contracts, prove them without the model in the loop, then widen surface area once traces stay clean. That mirrors both Nearform’s debugging sequence and Anthropic’s push to retire fragmented one-off connectors for something consistent enough to trust in production. (Sources: Nearform MCP implementation guide, Anthropic MCP announcement)

How this fits the wider MCP beat on AgenticWire

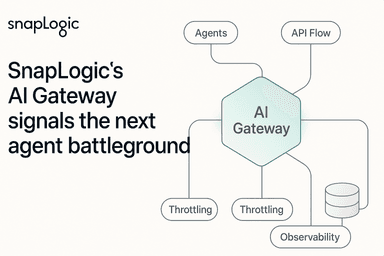

AgenticWire has covered MCP as capability and as risk: Microsoft’s Agent Framework lined graph workflows up next to MCP while signaling more protocol work ahead, and enterprise stacks increasingly talk about MCP endpoints as governed surfaces rather than hobby scripts. Platform vendors now bake MCP into CX and automation stories as well. That backdrop explains why implementation content sits next to security reviews instead of living only in Discord threads. When regulated data meets agents, protocols compress bespoke glue but concentrate blast radius unless privilege and logging keep pace; that tradeoff is now visible whenever procurement asks for architecture diagrams instead of demo GIFs.

Further reading on AgenticWire:

- “Microsoft Agent Framework 1.0 ships graph workflows and MCP, with A2A next” covers Microsoft’s graph plus MCP positioning for builders tracing vendor moves.

- “MCP STDIO risk: when config becomes command execution” walks through how MCP config can turn into command execution if operators treat STDIO servers like benign scripts.

- “MCP security reality check: CSA write-up on OX’s ‘MCP by design’ RCE issue” summarizes independent analysis when MCP’s flexibility meets hostile inputs.

Adoption notes

Ship one meaningful tool end to end, prove it with inspectors, then grow breadth. When inspectors fail, fix the protocol surface before you blame the model. For remote deployments, validate proxies and TLS paths early because streaming transports stumble on naive buffering. Keep Anthropic’s ecosystem map in mind: official SDKs and Desktop onboarding remain the anchor that keeps teams aligned before forks drift. Shared vocabulary across tools, resources, and prompts keeps security and product reviews from talking past each other. (Sources: Nearform MCP implementation guide, Anthropic MCP announcement, MCP documentation guide)

Related coverage

- Microsoft Agent Framework 1.0 ships graph workflows and MCP, with A2A next

- MCP STDIO risk: when config becomes command execution

- MCP security reality check: CSA write-up on OX’s “MCP by design” RCE issue

- Adobe launches CX Enterprise for auditable agentic workflows with MCP endpoints

References

- Anthropic MCP announcement - https://www.anthropic.com/news/model-context-protocol

- MCP documentation guide - https://modelcontextprotocol.info/docs/quickstart/guide/

- Nearform MCP implementation guide - https://nearform.com/digital-community/implementing-model-context-protocol-mcp-tips-tricks-and-pitfalls/